From Code Assistants to Autonomous Agents

Accelerate Chicago 2026

What you'll walk away with:

- A repeatable approach to directing AI agents through architecture-level changes

- Hands-on experience with coding agents, MCP servers, and subagent orchestration

- An understanding of how orchestration-layer agents work and how to start building your own

- Patterns for voice-first and async AI workflows that untether you from the terminal

- Practical mental models for managing autonomy, trust, and risk in agentic systems

Who this is for:

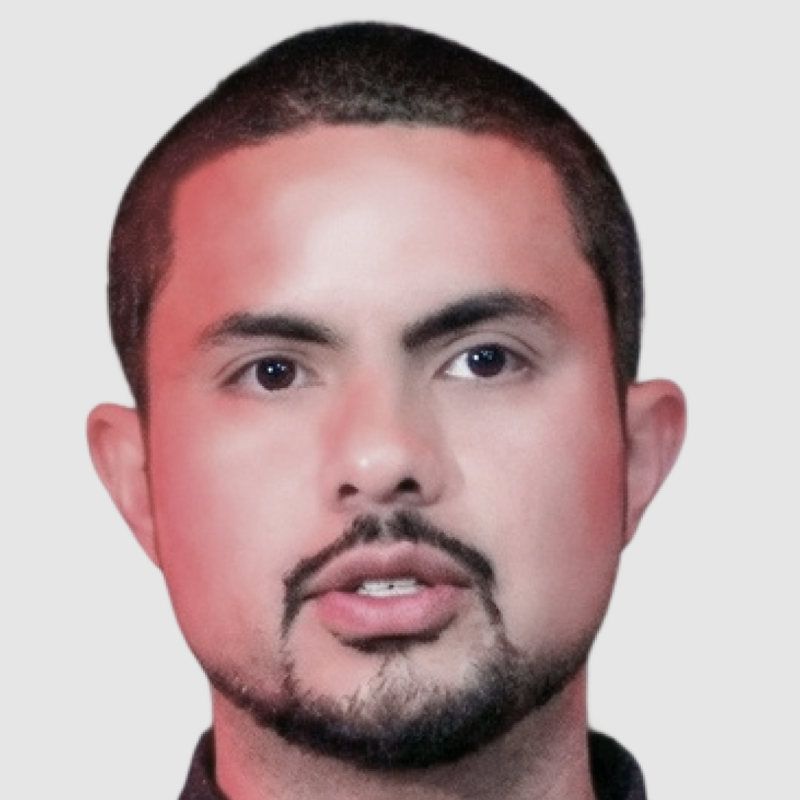

Senior engineers, tech leads, and architects (5+ years) who use AI tools daily and suspect there's a much bigger gear shift ahead.

Most developers have gotten comfortable with AI autocomplete. A few have started directing coding agents through multi-file changes. Hardly anyone is working at the level that comes next, where the line between thinking about software and building it starts to blur.

This full-day masterclass covers the entire abstraction ladder of AI-assisted development:

Level 1: IDE-Integrated Assistants. GitHub Copilot, Cursor, Cody, and similar tools have gotten very good at code generation, inline chat, and early agentic features. We'll look at what they do well and where they hit a natural ceiling: the editor window. They don't know about your Slack threads, your deploy pipeline, or your team's architectural decisions from last quarter.

Level 2: Coding Agents. Tools like Claude Code that understand your whole codebase, coordinate changes across dozens of files, and execute plans on their own. You'll get hands-on with agentic coding workflows, the Core Four (Context, Model, Prompt, Tools), MCP server integration, and multi-agent orchestration.

Level 3: Orchestration Agents. The layer above coding agents. Systems that manage your tools, communications, memory, and workflows across Slack, GitHub, calendars, and APIs. At Spantree, our engineers dispatch coding sessions from autonomous agents that hold long-term context, triage notifications, draft pull requests, scaffold documentation sites, and ship deployable artifacts. Sometimes the whole thing starts with a voice memo recorded at the gym.

That voice memo thing isn't a party trick. When your agent understands your codebase, your calendar, your team's Slack channels, and your architectural preferences, the keyboard becomes optional. You think out loud. Software happens.

We'll also be honest about the tradeoffs: trust boundaries, prompt injection risks, knowing when to let the agent run and when to pull back. This workshop comes from daily production use across an entire engineering consultancy, not a research lab.